Every role is a building. Know which walls are load-bearing before you renovate.

Most organizations automate by job title. The ones that succeed automate by judgment type. This framework shows you the difference, and why it matters for every workforce decision you make with AI.

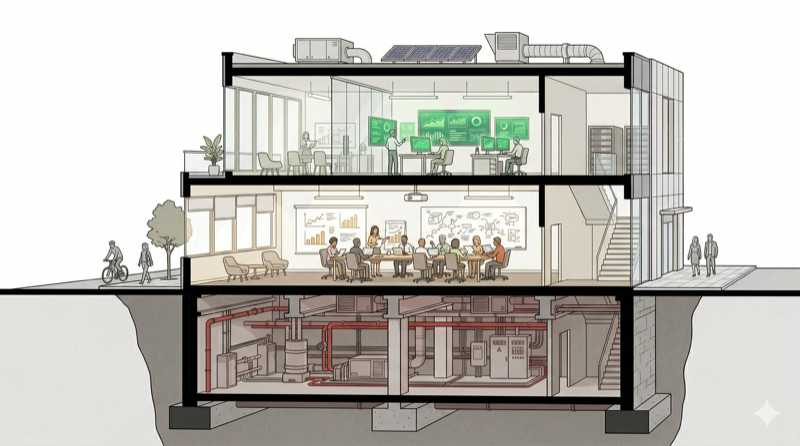

Three layers of judgment

AI is phenomenal at the first type, visible judgment. It struggles with the second, contextual judgment. The third, invisible judgment, it cannot see at all. The failure to distinguish between them is the root cause of most AI workforce disasters.

Judgment encoded in systems

Data in databases. Patterns in records. Structured decisions with clear feedback loops. This is where AI excels. It processes volume faster, catches patterns humans miss, and scales without fatigue.

Judgment that requires interpretation

AI can surface the inputs but cannot make the call. These decisions require calibration, reading the room, understanding what the data doesn't say.

Judgment you don't know exists until it's gone

Relationships. Institutional memory. The informal signal layer that only comes from being embedded in a system over time.

Two rules that change the calculus

Before you automate any role, internalize these. They explain why the most confident automation decisions often produce the worst outcomes.

The Volume Trap

When someone tells you AI handles most of a function, ask the follow-up: most of the volume, or most of the consequences?

Volume and consequence follow different distributions. Vilfredo Pareto documented this kind of concentration more than a century ago: a small share of inputs drives a disproportionate share of outcomes. AI amplifies the asymmetry, because it absorbs the routine volume and leaves humans with the cases where consequences cluster. A customer service bot resolves thousands of routine inquiries. The cases it cannot resolve (the escalations, the edge cases, the moments of genuine distress) carry disproportionate weight.
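To make the distinction concrete, here is a minimal Python sketch with entirely hypothetical numbers. It assumes a support queue split into three case types. It uses volume multiplied by an assumed cost per mishandled case as a crude stand-in for consequence. `CaseType` and `volume_vs_consequence` are illustrative names, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass
class CaseType:
    name: str
    monthly_volume: int   # hypothetical number of cases per month
    cost_per_case: float  # hypothetical cost if one case is mishandled
    ai_resolvable: bool   # can the bot close it without a human?


def volume_vs_consequence(cases: list[CaseType]) -> tuple[float, float]:
    """Return (share of volume, share of consequence) that AI can handle."""
    total_volume = sum(c.monthly_volume for c in cases)
    # Crude proxy for consequence: volume times cost per mishandled case.
    total_exposure = sum(c.monthly_volume * c.cost_per_case for c in cases)
    ai_volume = sum(c.monthly_volume for c in cases if c.ai_resolvable)
    ai_exposure = sum(
        c.monthly_volume * c.cost_per_case for c in cases if c.ai_resolvable
    )
    return ai_volume / total_volume, ai_exposure / total_exposure


# Entirely hypothetical support queue.
QUEUE = [
    CaseType("routine inquiries", 9_500, 10.0, ai_resolvable=True),
    CaseType("escalations and edge cases", 400, 500.0, ai_resolvable=False),
    CaseType("customers in genuine distress", 100, 2_000.0, ai_resolvable=False),
]

if __name__ == "__main__":
    volume_share, consequence_share = volume_vs_consequence(QUEUE)
    print(f"AI handles {volume_share:.0%} of the volume")
    print(f"AI handles {consequence_share:.0%} of the consequences")
```

With these made-up figures, the bot covers 95% of the volume but only about 19% of the consequences. The exact numbers matter less than the gap between the two shares, and that gap is what the follow-up question is designed to expose.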

The Bottleneck Principle

One load-bearing invisible component makes an entire role unsafe to fully automate. It does not matter how many components are safe. Structural integrity depends on the weakest critical point.

This is why partial automation consistently outperforms full replacement. You are not optimizing a spreadsheet. You are renovating a building while people are living in it.
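The rule works like a veto, not a score. Below is a minimal Python sketch of it. It assumes a role can be broken into components, and that each component is tagged with one of the three judgment layers described above and a load-bearing flag. `Layer`, `Component`, and both functions are hypothetical names for illustration, and the example role is invented. The sketch also assumes that load-bearing contextual components block full automation just as invisible ones do, since the framework says AI cannot make those calls either. The principle itself names only invisible components.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    VISIBLE = "encoded in systems"
    CONTEXTUAL = "requires interpretation"
    INVISIBLE = "unknown until it's gone"


@dataclass
class Component:
    name: str
    layer: Layer
    load_bearing: bool  # would the role fail without it?


def blocking_components(role: list[Component]) -> list[str]:
    """Load-bearing components that AI cannot carry on its own.

    Assumption: anything outside the visible layer counts, not only the
    invisible layer that the Bottleneck Principle names explicitly.
    """
    return [c.name for c in role if c.load_bearing and c.layer is not Layer.VISIBLE]


def safe_to_fully_automate(role: list[Component]) -> bool:
    # The number of safe components is irrelevant: one blocker is enough.
    return not blocking_components(role)


# Hypothetical breakdown of a customer-service role.
SUPPORT_ROLE = [
    Component("answer routine billing questions", Layer.VISIBLE, True),
    Component("issue standard refunds", Layer.VISIBLE, True),
    Component("tag tickets for reporting", Layer.VISIBLE, False),
    Component("de-escalate distressed customers", Layer.CONTEXTUAL, True),
    Component("know which accounts need a personal call", Layer.INVISIBLE, True),
]

if __name__ == "__main__":
    print(safe_to_fully_automate(SUPPORT_ROLE))
    print(blocking_components(SUPPORT_ROLE))
```

Three of the five components are visible, and the role still fails the check. Returning the blocking components instead of a bare yes or no shows where partial automation should stop.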

Three gates before you automate

Every automation decision should pass through these checkpoints. Skipping any one has produced predictable, well-documented failures.

Values alignment

What values govern these decisions? Has anyone articulated them in a form an AI system can operationalize? If the answer is no, you are deploying a system without a compass.

Liability exposure

If AI gets this decision wrong, what is the worst-case damage? Map it. Price it. Assign ownership. If no one owns the failure mode, no one will catch it.

Escalation path

When AI encounters a case it cannot handle, what is the human path? If there is no escalation path, there is no safety net. Only a delay between the failure and the discovery of the failure.

The confidence problem

The most dangerous characteristic of modern AI is not that it makes mistakes. It is that it makes mistakes with certainty.

A human expert who is unsure will hesitate, qualify, ask a colleague. An AI system that is wrong will present its answer with the same tone and confidence as when it is right. This asymmetry is the source of a new category of organizational risk.

Ellis George & K&L Gates. AI generated complete legal citations for cases that did not exist. The system presented fabricated case law with full confidence. The attorneys trusted it. The court sanctioned them $31,100.

AI-writing detectors. A Stanford study found that seven AI detection tools misclassified essays by non-native English speakers as AI-generated 61% of the time on average. High confidence. Systematic bias. The same detectors almost never made that mistake with essays by native speakers.

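Air Canada. The airline's customer-service chatbot confidently told a grieving passenger he could apply for a bereavement fare discount retroactively, after booking at full price. The airline's actual policy said otherwise. A Canadian tribunal held Air Canada liable for what its chatbot said. The company absorbed the cost. The algorithm did not.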

Applied analysis

The framework's value is predictive. These cases show what happens when organizations ignore the architecture, and what happens when they respect it.

The architecture collapse

Leadership eliminated eighty percent of the workforce without understanding the judgment architecture underneath. Content moderation, infrastructure reliability, advertiser relationships, regulatory compliance: each team appeared overstaffed when evaluated in isolation.

They were not independent systems. They were connected by invisible judgment. Remove the walls that don't appear structural on a spreadsheet. Watch the building come down.

The collaboration architecture

Markel deployed Cytora AI to process applications and flag risks automatically. They did not eliminate underwriters. They redirected them. Underwriters now focus on complex cases, relationship management, and the judgment calls that require contextual and invisible knowledge.

AI handles visible judgment. Humans handle the rest. The building stays standing.

Stop renovating blind

If you're making AI workforce decisions, let's talk about what the judgment architecture looks like inside your organization.